Recent AI releases have put us into a new technological era and it’s both exciting and deeply problematic

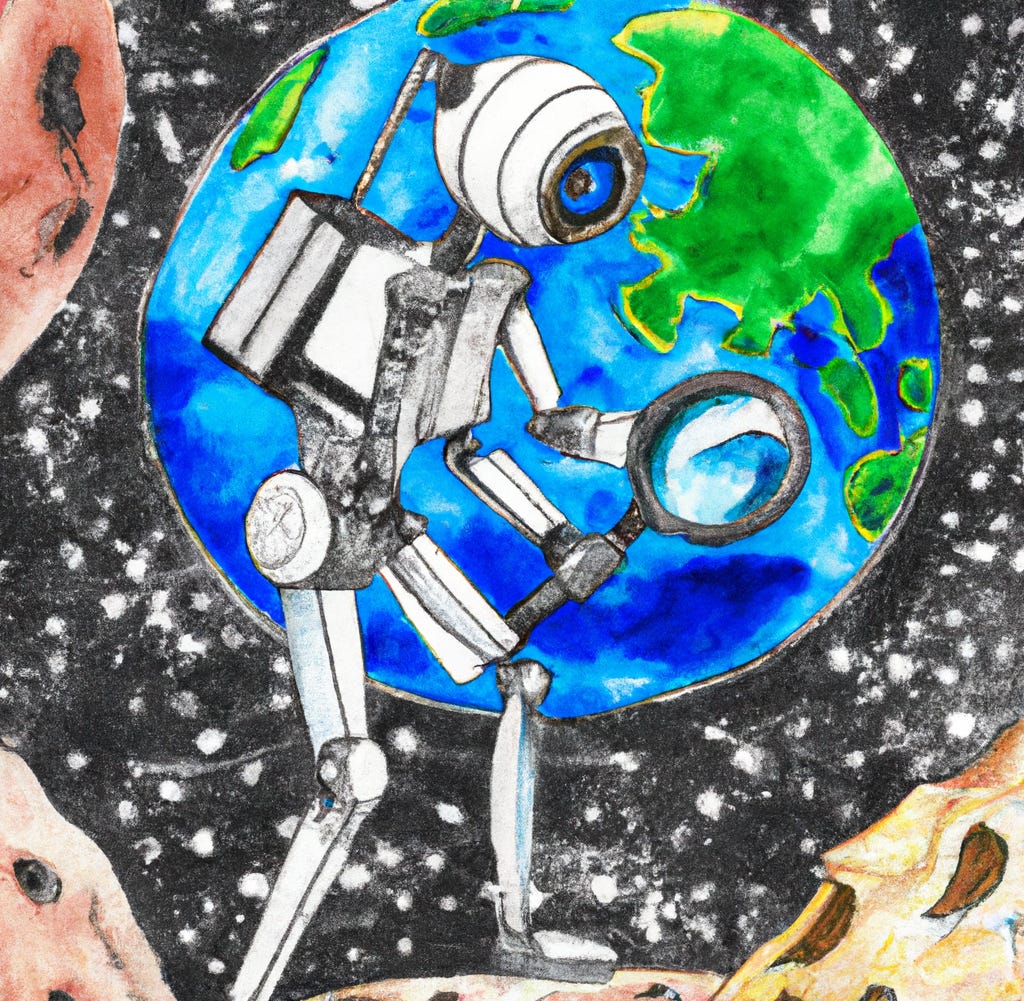

The best way forward may be to transform these AIs into digital scientists

In brief: The public release of ChatGPT has confirmed that we have now entered a new technological era, where we have easy access to helpful generative digital agents with vast knowledge. This has the potential, for instance, to completely replace search engines such as Google with something better. This was already presaged by earlier reports of these large language models (LLMs), but public access to one of these LLMs is creating this sea change. As with technological progress throughout history, along with new capabilities come new problems. Despite at least 5 years of effort by computer scientists attempting to deal with these issues, LLMs (including ChatGPT) have a variety of biases and often mix fact and fiction in their answers. I argue that a clever way out of this quagmire could be to build digital scientists grounded in observation and reason, with valid world models for predicting the outcomes of their actions to counter their biases and align with human values. After all, science and reason have been instrumental in reducing these same problems of bias and confusion among humans over the past several hundred years.

The AI (artificial intelligence) research group OpenAI recently released ChatGPT – a large language model (LLM) trained on many billions of pages of internet content. Computer scientists have been reporting remarkable LLM feats in recent years, but ChatGPT is the public’s first chance to be in the driver’s seat with these new capabilities. On a practical level, LLMs like ChatGPT have the potential to replace internet search engines by providing answers to questions – including questions requiring generation of completely novel ideas – in easy-to-understand language. These new capabilities have brought us into a new technological era. Consistent with technological progress throughout history, along with these new capabilities have come new problems.

I think most of us imagined future AI as extremely intelligent, and that included being grounded in reality. It turns out that recent AI releases have many of the hallmarks of intelligence – such as being able to perform many novel tasks (Legg & Hutter, 2007) – but without the basic ability to tell fact from fiction. This was most dramatically illustrated by Meta/Facebook’s recent release of Galactica. This AI “scientist” would confidently explain complex scientific concepts in easy-to-understand language, write short scientific papers, and mix subtle but consequential reasoning errors into complex explanations. It turns out it was worse than having nothing, since it would state fiction with the authority of science. Luckily, Galactica was taken down within days of its public release.

But Galactica illustrates a deeper problem with all current LLMs: the lack of grounding in observation and well-calibrated reasoning. Galactica was trained on scientific content, but it was no scientist. Instead, these LLMs such as ChatGPT are strongly biased by their training data, which is not reality but rather billions of pages of text on the internet. Needless to say, the training data includes many cognitive and social biases, and research has confirmed this in turn biases LLMs.

I think it should concern us that computer scientists have known about this problem for at least 5 years, but it has yet to be solved. Indeed, ChatGPT has many “AI safety” measures in place, substantially reducing but not eliminating its social biases. For instance, after its training with internet text data, ChatGPT is apparently trained using a reinforcement learning algorithm wherein humans “reward” or “punish” the AI depending on whether the AI’s answers were offensive or not. This has helped make the AI less biased, but it has acted only as a facade over a deep flaw in the AI’s architecture. It is relatively easy to trick ChatGPT into revealing its true biased nature, and its confusion between fact and fiction.

I don’t see a way out of this quagmire without imbuing these AIs with the very thing that has raised humanity (at least in part) out of the same sort of problems: science and reason.

Science writ large is about grounding our beliefs in the reality around us. Reasoning writ large is about keeping our beliefs consistent with our grounded observations. Together they allow (most of) us to navigate confusing, biased, and contradictory places like the internet without losing ourselves to biased beliefs and ignorance.

So what are LLMs missing? First, they lack our embodiment, which cuts them off from physical reality. This lack of grounding in physical bodies deprives them of the many billions of data points we experience as children during our development. As an illustration, consider that infants are already receiving a deluge of multimodal (visual, auditory, touch, proprioceptive, taste, and smell) data upon birth. Neuroscience and psychology tell us that we are conscious of a moment about every 1/4 of a second or so, such that with 28,800 seconds (assuming 8 waking hours) we already experience 115,200 multimodal data points in the first day of life. Over 18 years (assuming 16 waking hours on average) this is 1,513,728,000 rich multimodal data points mostly grounded in physical reality. LLMs are trained at the same scale, but with zero grounding in physical reality and not in a multimodal manner (i.e., with only text data).
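
As a rough check on the arithmetic above, here is a minimal Python sketch. The quoted figures of 115,200 and 1,513,728,000 correspond to four conscious moments per second (one every quarter second). The function and parameter names are hypothetical, chosen only for illustration, and the 365-day year is an assumption.

```python
SECONDS_PER_HOUR = 3600


def conscious_moments(days, waking_hours_per_day, moments_per_second):
    """Estimate the number of conscious 'data points' over a span of days.

    All three inputs are rough assumptions, not measured quantities.
    """
    waking_seconds = days * waking_hours_per_day * SECONDS_PER_HOUR
    return waking_seconds * moments_per_second


# First day of life: 8 waking hours, four moments per second.
first_day = conscious_moments(days=1, waking_hours_per_day=8, moments_per_second=4)

# First 18 years: 16 waking hours per day on average, 365-day years assumed.
eighteen_years = conscious_moments(
    days=18 * 365, waking_hours_per_day=16, moments_per_second=4
)

print(f"{first_day:,}")       # 115,200
print(f"{eighteen_years:,}")  # 1,513,728,000
```

The result scales linearly with the assumed rate; at five moments per second, for example, the same function gives 144,000 and 1,892,160,000. Either way, the order of magnitude (about a billion data points by adulthood) is what matters for the comparison with LLM training data.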

The pathway to effective scientist AIs is – as a start – to ground them in physical reality. This could be either actual physical reality using robotics, or the perhaps more practical option of using highly realistic simulations of physical reality. Only with grounding in physical reality would the text data they are later trained with have the same meaning as it does to us physically grounded beings. This would of course make our interactions with scientist AIs more natural and intuitive. More importantly, however, this grounding in physical reality would be a gateway to grounding their knowledge in empirical truth.

This is only the first step. After all, humans learn through physical grounding, yet many humans are biased or confused on many topics. Historically, scientists working in a community that prioritizes evidence and healthy skepticism have been the best calibrated to reality. Evidence for this comes, of course, from the evidence these scientists discover themselves. In case there is some sort of hidden bias here from a community evaluating itself, further evidence comes from ubiquitous practical applications of their discoveries that decisively demonstrate that their scientific community learned something new and important – things like electricity (see Michael Faraday), digital computers (see Alan Turing and John von Neumann), and vaccines (see Edward Jenner and Louis Pasteur).

I am suggesting that we should instill the core principles of well-calibrated scientific communities – prioritization of empirical evidence, valid logic, and healthy skepticism – in a community of scientist AIs.

This would, if approaching human-level scientific reasoning processes, create the evaluative feedback loop necessary to prevent AIs like ChatGPT from spinning off into the land of make-believe on any given topic. Further, it could create a virtuous cycle of empirical verification and discovery that could expand human knowledge along with the knowledge of the scientist AI community.

Until ChatGPT and similar digital agents are properly calibrated by science and reasoning, I recommend remaining skeptical of what they tell you, perhaps using their suggestions as a starting point (rather than an end point) for your research.